Federated Learning from M-States: Aggregating Implicit Memories Across Agents

This post is an early-stage research outline. We're sharing our thinking to invite collaboration and feedback.

The Setup: Reproduction in the Evolutionary Loop

The Dialectical Agent builds belief trees and runs debates — the contest where ideas compete. HEXIS provides the genetic material — implicit memory states that encode an agent's accumulated experience as attention perturbations, shaping what they notice and how they reason without explicit retrieval. Bayesian belief updating is the fitness function — credences shift based on which arguments survive the selection pressure of structured disagreement.

Federation is the missing piece: reproduction. How do you take a population of agents — each carrying M-states shaped by dozens of debates, each with nuanced credence distributions across hundreds of beliefs — and combine their experience into something greater than any individual?

Consider the loop so far: a BeliefRefiner builds a tree of beliefs about a resolution. PerspectiveBuilder creates side-specific views. These perspectives are encoded as M-states via HEXIS and delivered to debaters. The debate runs. Judges update the belief tree through Bayesian conditionalization. After many debates, each agent — debaters and judges alike — carries M-states encoding experience-specific dispositions. A judge who watched 50 debates on economic policy has different attention patterns toward economic evidence than one who specialized in ethics cases.

Now what? Without federation, these insights stay trapped in individual agents. With federation, the population's collective experience gets compressed into higher-order representations that augment everyone's future debates. This is where evolution happens — combining successful traits from the population while preserving the diversity that makes multi-agent debate productive.

Why This Matters

The Diversity Problem

Current approaches to multi-agent AI systems face a tension between specialization and generalization:

- Shared weights: All agents behave identically. No epistemic diversity.

- Independent fine-tuning: Each agent diverges. No knowledge transfer.

- Ensemble methods: Combine outputs, not representations. Lose the mechanism-level insights.

M-states offer a middle path. Because they operate as perturbations on a frozen base model (Q' = Q(I + M_A M_B^T)), they are naturally composable — you can add, average, interpolate, or selectively apply M-states without modifying the underlying model.
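To make the perturbation concrete, here is a minimal NumPy sketch. It assumes M_A and M_B are d×r factor matrices and Q is a batch of query rows; the sizes and the `apply_m_state` helper are illustrative, not taken from HEXIS.

```python
import numpy as np

def apply_m_state(Q, M_A, M_B):
    """Return Q' = Q (I + M_A M_B^T) without materializing the d x d matrix."""
    # (Q @ M_A) has shape (n_tokens, rank); M_B.T maps it back to (n_tokens, d_model).
    return Q + (Q @ M_A) @ M_B.T

rng = np.random.default_rng(0)
n_tokens, d_model, rank = 4, 16, 2            # illustrative sizes (assumption)
Q = rng.normal(size=(n_tokens, d_model))      # frozen base-model queries
M_A = 0.1 * rng.normal(size=(d_model, rank))
M_B = 0.1 * rng.normal(size=(d_model, rank))

Q_prime = apply_m_state(Q, M_A, M_B)
Q_explicit = Q @ (np.eye(d_model) + M_A @ M_B.T)   # literal form of the formula
delta = Q_prime - Q                                 # the low-rank Q-delta
```

The base queries are never modified in place, which is what makes the perturbation removable and swappable: the frozen model plus a different (M_A, M_B) pair is a different agent.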

The Privacy Argument

In many settings, the experiences that shape an agent's disposition are private or proprietary: a legal AI trained on privileged case files, a medical AI shaped by patient interactions, a debate agent whose strategic insights came from a specific opponent's weaknesses. Federating M-states lets us share the effect of experience (how attention patterns shift) without sharing the experience itself (the raw text that produced the shift). The write function φ is a one-way compression: you can produce M from experience, but you cannot reconstruct experience from M.

This isn't just a practical convenience — it's a structural property of implicit memory. The M-state is not invertible back to the experience that produced it, just as you can't reconstruct the specific fearful situations that formed someone's courage by observing their courageous behavior. The hexis is a one-way compression of experience into disposition. Compare this to federated learning on full gradients, where gradient inversion attacks can reconstruct training data because full gradients preserve too much information about the input. M-states are far more compressed — they capture not "what the model learned to predict" but "how the model's attention geometry shifted," a much more abstract representation.

The rank of M even controls the privacy-utility tradeoff directly: a rank-1 M-state is maximally compressed with almost no information about the original experience surviving, while higher rank preserves more experiential structure but potentially leaks more. This could be formalized as a differential privacy bound parameterized by M-state rank.

Research Directions

1. M-State Arithmetic

If M-states are low-rank perturbations in query space, can we do arithmetic with them?

Hypothesis: M-state operations should mirror the semantic operations they encode.

- Addition: M_debate + M_empathy → an agent that debates with empathetic awareness?

- Subtraction: M_experienced - M_novice → the delta of expertise?

- Interpolation: α·M_aggressive + (1-α)·M_cautious → controllable strategic disposition?
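One mechanical detail is worth spelling out. If each M-state is stored as a factor pair (M_A, M_B), the operations above act on the product M_A M_B^T, not on the factors. The sketch below, with hypothetical helper names, shows that a weighted sum of two rank-r M-states is represented exactly by concatenating factors (rank up to 2r), while interpolating the factors directly gives a different matrix.

```python
import numpy as np

def product(m):
    M_A, M_B = m
    return M_A @ M_B.T

def weighted_sum(m1, m2, w1=1.0, w2=1.0):
    """Exact factors of w1*M1 + w2*M2; the rank grows to at most r1 + r2."""
    (A1, B1), (A2, B2) = m1, m2
    return np.hstack([w1 * A1, w2 * A2]), np.hstack([B1, B2])

def interpolate(m1, m2, alpha):
    return weighted_sum(m1, m2, alpha, 1.0 - alpha)

rng = np.random.default_rng(0)
d, r = 8, 2                                   # illustrative sizes (assumption)
m_aggressive = (rng.normal(size=(d, r)), rng.normal(size=(d, r)))
m_cautious = (rng.normal(size=(d, r)), rng.normal(size=(d, r)))

alpha = 0.3
blend = interpolate(m_aggressive, m_cautious, alpha)
target = alpha * product(m_aggressive) + (1 - alpha) * product(m_cautious)

# Naive alternative: interpolate the factors themselves, then multiply.
naive = product((
    alpha * m_aggressive[0] + (1 - alpha) * m_cautious[0],
    alpha * m_aggressive[1] + (1 - alpha) * m_cautious[1],
))
```

Subtraction is `weighted_sum(m1, m2, 1.0, -1.0)`. Whether the resulting matrix is semantically coherent is exactly the open question; the sketch only pins down what the arithmetic means at the matrix level.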

Early evidence from HEXIS v6 (interpolation experiments) suggests this partially works — interpolating between career-related M-states produces blended dispositions. But the relationship is not linear, and composition of unrelated M-states often produces interference rather than combination.

Open question: Under what conditions does M-state arithmetic preserve semantic coherence? Is there a basis in which M-states decompose into independent factors?

2. Federated Aggregation

Classical federated learning averages client updates (gradients in FedSGD, model weights in FedAvg). Applied naively to M-states, this gives:

M_global = (1/N) Σ M_i (FedAvg)

But M-states aren't gradients — they're functional perturbations. Averaging in parameter space may destroy functional structure (we learned this three times with contrastive loss: parameter-space similarity ≠ functional similarity).
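A small example of why parameter-space averaging can fail, assuming M-states are stored as factor pairs and FedAvg is applied to the factors. Two agents whose factors differ only by a sign flip implement the same perturbation, yet their factor-wise average is zero.

```python
import numpy as np

rng = np.random.default_rng(1)
d, r = 8, 2                                  # illustrative sizes (assumption)
A = rng.normal(size=(d, r))
B = rng.normal(size=(d, r))

agent_1 = (A, B)
agent_2 = (-A, -B)                           # different parameters, same product

same_function = np.allclose(agent_1[0] @ agent_1[1].T, agent_2[0] @ agent_2[1].T)

# FedAvg over the factors: both averages vanish, so the perturbation disappears.
A_avg = (agent_1[0] + agent_2[0]) / 2
B_avg = (agent_1[1] + agent_2[1]) / 2
factor_avg_product = A_avg @ B_avg.T

# Averaging the products keeps the shared function intact.
product_avg = (agent_1[0] @ agent_1[1].T + agent_2[0] @ agent_2[1].T) / 2
```

The sign flip is the simplest case of a more general ambiguity: any invertible r×r matrix G gives factors (A G, B G^{-T}) with the same product. This is one concrete reason to compare M-states by what they do rather than by their coordinates.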

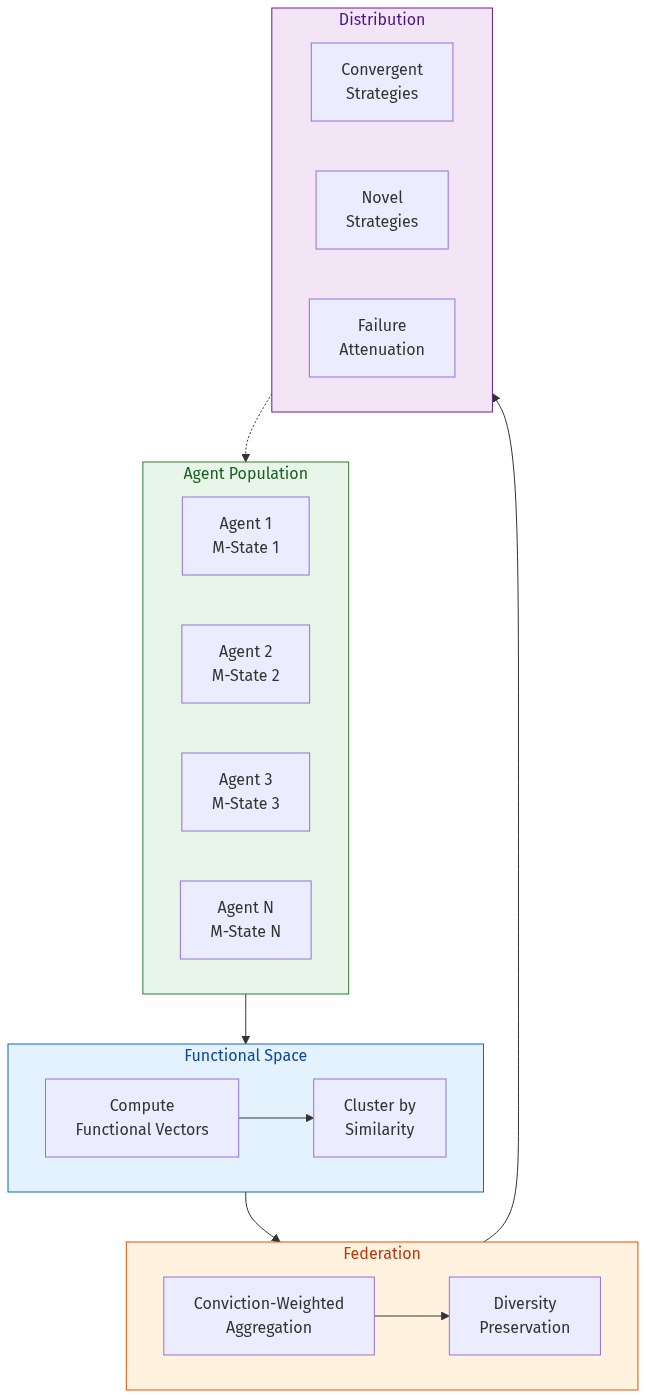

Proposed approach: Federate in functional space:

func_vec_i = concat[mean(h_ℓ @ M_A,ℓ @ M_B,ℓ^T) for ℓ in layers]

The procedure has four steps:

- Each agent computes its functional vector (the actual Q-delta on a shared reference input).

- Functional vectors are clustered to identify complementary vs. redundant M-states.

- M-states are aggregated within clusters using a weighted combination based on conviction strength.

- Aggregated M-states are distributed back to agents.

This preserves the functional diversity of the population while sharing useful patterns.
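A sketch of the first two steps, under stated assumptions: the mean is taken over reference tokens, similarity is cosine, and clustering is a simple greedy threshold rule. The `greedy_clusters` helper and the 0.9 threshold are placeholders, not the method we have settled on.

```python
import numpy as np

def functional_vector(hidden_by_layer, m_state_by_layer):
    """Concatenate, over layers, the token-averaged Q-delta h @ M_A @ M_B^T."""
    parts = []
    for h, (M_A, M_B) in zip(hidden_by_layer, m_state_by_layer):
        q_delta = (h @ M_A) @ M_B.T           # (n_tokens, d_model)
        parts.append(q_delta.mean(axis=0))    # average over reference tokens
    return np.concatenate(parts)

def cosine(u, v):
    return float(u @ v / (np.linalg.norm(u) * np.linalg.norm(v)))

def greedy_clusters(vectors, threshold=0.9):
    """Put each vector in the first cluster whose seed it resembles."""
    clusters = []                             # lists of indices; index 0 is the seed
    for i, v in enumerate(vectors):
        for members in clusters:
            if cosine(vectors[members[0]], v) >= threshold:
                members.append(i)
                break
        else:
            clusters.append([i])
    return clusters

rng = np.random.default_rng(3)
n_layers, n_tokens, d_model, rank = 2, 6, 16, 2   # illustrative sizes
reference = [rng.normal(size=(n_tokens, d_model)) for _ in range(n_layers)]
base = [(rng.normal(size=(d_model, rank)), rng.normal(size=(d_model, rank)))
        for _ in range(n_layers)]
agents = [
    base,                                     # agent 0
    [(-A, -B) for A, B in base],              # agent 1: sign-flipped factors, same function
    [(A, -B) for A, B in base],               # agent 2: shares a factor with agent 0, opposite function
]
func_vecs = [functional_vector(reference, m) for m in agents]
clusters = greedy_clusters(func_vecs)
```

Agents 0 and 1 land in the same cluster even though their parameters are negatives of each other, while agent 2 is separated despite sharing a factor with agent 0.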

3. The Specialist-Generalist Spectrum

HEXIS v16 discovered that the write function φ is a specialist encoder: M-states for novel, out-of-distribution domains collapse to near-identical representations (cosine ~0.996). This is consistent with cognitive science — implicit memory is shaped by repeated, domain-specific experience, not one-shot learning.

In a federated setting, this means within-domain federation should work well (agents with overlapping experience domains produce M-states in a shared subspace), while cross-domain federation may be meaningless (M-states from unrelated domains are already collapsed to a common point).

This suggests a hierarchical federation architecture: a global shared base with domain-specific clusters (legal, debate, medical), where M-states are federated within clusters but only structural insights transfer across domains.

4. Conviction-Weighted Federation

The conviction system from HEXIS v16 provides natural aggregation weights:

- Gate: Should this experience be shared at all? (relevance filtering)

- Strength: How strongly should this experience weight in aggregation?

- Novelty: Is this experience redundant with what the federation already knows?

M_federated = Σ (gate_i · strength_i · novelty_i · M_i) / Z

This creates a natural attention mechanism over the population's experiences: high-conviction, novel M-states get amplified; redundant or low-confidence ones are suppressed.
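A minimal sketch of the weighted sum, assuming Z is the sum of the per-agent weights (so the result is a convex combination) and that each M_i is given as a full perturbation matrix. The numeric gate, strength, and novelty values are made up for illustration.

```python
import numpy as np

def conviction_weighted(m_states, gate, strength, novelty):
    """Return (M_federated, normalized_weights) for weights gate*strength*novelty."""
    w = (np.asarray(gate, dtype=float)
         * np.asarray(strength, dtype=float)
         * np.asarray(novelty, dtype=float))
    Z = w.sum()                               # assumption: Z normalizes weights to sum to 1
    if Z == 0:
        raise ValueError("every contribution was gated out")
    weights = w / Z
    stacked = np.stack(m_states)              # (N, d, d)
    return np.tensordot(weights, stacked, axes=1), weights

# Three toy M-states; the third is gated out entirely.
m_states = [1.0 * np.eye(2), 2.0 * np.eye(2), 3.0 * np.eye(2)]
gate = [1, 1, 0]
strength = [0.5, 1.0, 0.9]
novelty = [1.0, 0.5, 1.0]

M_federated, weights = conviction_weighted(m_states, gate, strength, novelty)
```

Here the first two agents end up with equal weight (0.5 × 1.0 and 1.0 × 0.5), so the federated state is their midpoint and the gated agent contributes nothing.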

5. The Conditioning-Generation Gap in Federation

HEXIS's central open problem — strong conditioning on cross-entropy doesn't automatically translate to behavioral change — becomes even more acute in federation. If individual M-states already struggle to influence generation, will aggregated M-states fare better or worse?

Hypothesis: Federation might actually help. If the gap is partly caused by the model learning to route around any single M-state's perturbation, a population of diverse M-states presents a higher-dimensional perturbation that's harder to route around. The base model would need to develop compensation strategies for every possible combination, which may be computationally infeasible.

Federation as Political Theory

Here is where the technical architecture meets a much older set of questions. The federation mechanism — specifically, who generates the group reflection and how — maps directly onto competing theories of collective epistemics from political science. This isn't an analogy. The M-state dynamics under different synthesis mechanisms reproduce the formal structure of these theories, and their predictions are falsifiable within our framework.

Six Models of Collective Updating

Consider what happens when a group of agents has debated and now must federate their experience. The independent variable is the synthesis mechanism: who produces the group reflection that gets encoded into shared M-states?

| Model | Synthesis Mechanism | Convergence | Diversity | Power Dynamics |
|---|---|---|---|---|
| Habermasian | Neutral third model synthesizes | High | Erodes | Hidden ("best argument wins") |
| Condorcet | Both agents synthesize, averaged in M-space | Partial | Preserved | Equal weighting |
| Polarization | Winning/most-confident agent synthesizes | High | Collapses | Dominant M wins |
| Modus Vivendi | Intersection only (shared vs. individual φ passes) | Partial | Preserved | Negotiated |
| Epistemic Justice | Variable — whose reflection counts? | Variable | Variable | Synthesizer wins |
| Federalist | Rotating synthesis role | Low | Preserved | Rotates |

Each model makes specific predictions about what happens to M-state geometry over iterated federation.

The Habermasian Model: Discourse Ethics

A neutral third model reads the debate transcript and produces a synthesis — the "best argument" as determined by an impartial observer. All agents update their M-states from this single reflection.

The prediction: high convergence. Agents' M-states drift toward the neutral synthesizer's attentional priors. Diversity erodes — not through domination, but through the hidden power of whoever defines "impartial." The political science parallel is Habermas's ideal speech situation, where the force of the better argument is supposed to prevail. The critique, from Mouffe and others, is that "impartial" always smuggles in a perspective.

In M-state terms: the neutral model's own implicit biases — its attention patterns, what it considers salient — become the epistemic common ground whether or not they deserve to be.

The Polarization Model: Sunstein's Warning

The most confident agent — the one whose M-state produces higher mod_scale — generates the group reflection. Its framing dominates. φ encodes its perspective into the shared update.

# If M_A has higher mod_scale (is "louder"):

Group_R = LLM(transcript | M_A) # A's framing dominates

ΔM_B = φ(Group_R | M_A) # B gets pulled toward A

This is the danger condition. Over repeated debates, M-states converge toward whichever disposition produces higher-confidence outputs. Epistemic monoculture emerges not by design but by dynamics. Power asymmetry in M-space translates directly to epistemic dominance in collective updating.
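The collapse dynamic can be illustrated with a deliberately crude toy model. The assumptions are ours and are not HEXIS behavior: each M-state is flattened to a vector, vector norm stands in for mod_scale, and φ is replaced by a linear pull of strength `eta` toward the synthesizer.

```python
import numpy as np

def pairwise_mean_cosine(M):
    """Mean cosine similarity over all distinct pairs of rows."""
    unit = M / np.linalg.norm(M, axis=1, keepdims=True)
    sims = unit @ unit.T
    upper = np.triu_indices(len(M), k=1)
    return float(sims[upper].mean())

def polarization_round(M, eta=0.2):
    """The loudest agent synthesizes; everyone moves a fraction eta toward it."""
    loudest = int(np.argmax(np.linalg.norm(M, axis=1)))   # norm as a mod_scale proxy
    return M + eta * (M[loudest] - M), loudest

rng = np.random.default_rng(2)
M = rng.normal(size=(5, 12))                  # 5 agents, 12-dim toy M-states
M /= np.linalg.norm(M, axis=1, keepdims=True) # start everyone at unit norm
M[0] *= 3.0                                   # agent 0 is three times "louder"

before = pairwise_mean_cosine(M)
for _ in range(20):
    M, loudest = polarization_round(M)
after = pairwise_mean_cosine(M)
```

In this toy the loudest agent never moves and every other agent decays geometrically toward it, so the population becomes nearly collinear. Whether a learned φ behaves anything like a linear pull is an empirical question.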

If HEXIS with group reflection produces polarization rather than genuine pluralism, the AI pluralism thesis is undermined. We need to show this either doesn't happen or can be controlled.

The Modus Vivendi Model: Rawlsian Pluralism

This is probably the most realistic model of how healthy intellectual communities actually work. The group reflection encodes only the intersection — what both agents conceded or agreed on. Individual reflections encode persistent disagreements. Different things go to different memory layers.

Group_R = LLM(transcript, "What did both agents concede or agree on?")

Individual_R_A = LLM(transcript | M_A, "What did you maintain that they didn't accept?")

ΔM_A_shared = φ(Group_R) # moves toward other

ΔM_A_individual = φ(Individual_R_A | M_A) # maintains own

M_A_new = M_A + α_shared * ΔM_A_shared + α_individual * ΔM_A_individual

Agents converge on negotiated common ground while maintaining distinct positions on core disagreements. Partial overlap in M-space with persistent divergence on fundamental axes. The α weights between shared and individual updates become a tunable parameter controlling the pluralism-coordination tradeoff.
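A toy instance of the update rule, with hand-picked deltas standing in for the two φ passes. The two-dimensional layout (axis 0 for common ground, axis 1 for the contested issue) and all numbers are assumptions chosen to make the effect visible.

```python
import numpy as np

def modus_vivendi_update(M, delta_shared, delta_individual,
                         alpha_shared=0.5, alpha_individual=0.2):
    """M_new = M + alpha_shared * delta_shared + alpha_individual * delta_individual."""
    return M + alpha_shared * delta_shared + alpha_individual * delta_individual

# Axis 0: what both agents conceded. Axis 1: the persistent disagreement.
M_A = np.array([0.0, 1.0])
M_B = np.array([0.0, -1.0])

delta_shared = np.array([1.0, 0.0])           # stand-in for phi(Group_R), same for both
delta_A_individual = np.array([0.0, 0.5])     # stand-in for phi(Individual_R_A | M_A)
delta_B_individual = np.array([0.0, -0.5])    # stand-in for phi(Individual_R_B | M_B)

M_A_new = modus_vivendi_update(M_A, delta_shared, delta_A_individual)
M_B_new = modus_vivendi_update(M_B, delta_shared, delta_B_individual)
```

Both agents move identically along the shared axis and further apart along the contested one. Raising `alpha_shared` relative to `alpha_individual` shifts the balance toward coordination.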

The political science parallel: Rawls's overlapping consensus, where citizens with incompatible comprehensive doctrines can coordinate on political principles without converging on worldviews. Pluralism is the stable endpoint, not an unstable transition state.

The Epistemic Justice Model

Who generates the group reflection determines what gets encoded. If it's always the same agent — or always the agent with the highest confidence score — then some perspectives are systematically amplified while others are suppressed. This is Fricker's epistemic injustice reproduced mechanistically: testimonial injustice (some agents' contributions are discounted) and hermeneutical injustice (some agents lack the framework to articulate their experience in ways the synthesizer recognizes).

The mod_scale asymmetry makes this concrete. If one disposition produces larger Q-perturbations, its framing of the group reflection will dominate φ's encoding.

The Federalist Model: Rotating Power

No single agent or mechanism controls synthesis. The synthesis role rotates. Each agent takes turns generating the group reflection, ensuring every perspective shapes the collective updating at some point.

The prediction: lowest convergence, highest diversity preservation. Power rotates rather than accumulating. The cost is slower collective learning — you don't converge on shared understanding as quickly. The benefit is robustness against the failure modes of every other model.
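The scheduling difference between rotation and the most-confident rule is simple to state in code. This sketch assumes a fixed round-robin order and, for contrast, a static confidence score per agent. Both are simplifications, and the agent names and scores are hypothetical.

```python
from collections import Counter
from itertools import cycle, islice

def rotating_synthesizers(agents, n_rounds):
    """Round-robin: each agent synthesizes in turn."""
    return list(islice(cycle(agents), n_rounds))

def most_confident_synthesizers(confidence, n_rounds):
    """Polarization-style rule: the top-confidence agent synthesizes every round."""
    winner = max(confidence, key=confidence.get)
    return [winner] * n_rounds

agents = ["A", "B", "C", "D"]
confidence = {"A": 0.9, "B": 0.6, "C": 0.7, "D": 0.5}   # hypothetical scores

rotation_counts = Counter(rotating_synthesizers(agents, 12))
dominance_counts = Counter(most_confident_synthesizers(confidence, 12))
```

Under rotation every agent synthesizes three of the twelve rounds; under the confidence rule agent A synthesizes all twelve.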

Why This Matters Beyond AI

These aren't just engineering choices. They're empirical tests of theories that political scientists have only been able to study through surveys and field experiments with all their confounds. M-state federation provides a controlled environment where you can hold everything constant — same agents, same topic, same number of turns — and vary only the synthesis mechanism.

The predictions are falsifiable:

- Habermas predicts the neutral-synthesizer condition produces the best epistemic outcomes.

- Sunstein predicts the confident-agent condition produces polarization.

- Rawls predicts the intersection-only condition best preserves pluralism while enabling coordination.

The Formal Foundations

Three results from social epistemology give this framework additional teeth.

Aumann's Agreement Theorem. If two rational agents share a common prior and their posterior beliefs become common knowledge, they must agree. In our system, agents start from the same uniform prior by design. Any persistent disagreement after sufficient debate must come from private information that hasn't been shared or from the agents not being fully Bayesian. Federation is the mechanism that makes posteriors approach common knowledge — but the synthesis mechanism determines how that approach happens and whether it preserves productive disagreement along the way.

The Condorcet Jury Theorem. If each agent is better than chance at identifying strong arguments and evaluates independently, group accuracy approaches 1 as the population grows. But deliberation complicates this: each agent's views evolve as they learn from others, and the independence assumption breaks. Research on deliberative polling shows that deliberation improves group performance only under certain conditions involving the decision rule, group size, and the presence of neutral agents. Our synthesis mechanism is precisely the decision rule variable that determines whether Condorcet's optimistic prediction holds.
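For reference, the independent-voter baseline can be computed exactly. The function below gives the probability that a strict majority of n independent agents, each correct with probability p, picks the right answer. It says nothing about the deliberative case, where independence fails.

```python
from math import comb

def majority_accuracy(n, p):
    """P(strict majority of n independent voters is correct); n must be odd."""
    if n % 2 == 0:
        raise ValueError("use an odd n to avoid ties")
    k_min = n // 2 + 1
    return sum(comb(n, k) * p**k * (1 - p)**(n - k) for k in range(k_min, n + 1))

acc_3 = majority_accuracy(3, 0.6)      # 3 * 0.6^2 * 0.4 + 0.6^3 = 0.648
acc_11 = majority_accuracy(11, 0.6)
acc_101 = majority_accuracy(101, 0.6)
below_chance_3 = majority_accuracy(3, 0.4)   # the theorem cuts the other way below 0.5
```

With p = 0.6, accuracy rises with group size; with p = 0.4 the same formula shows the group doing worse than any individual.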

The Martingale Problem. Recent work modeling LLM agents' beliefs as Dirichlet priors with Multinomial sampling proves that naive multi-agent debate induces a martingale over agents' belief in the correct answer — the expected belief remains unchanged across debate rounds. Debate alone, without a structured updating mechanism, is a random walk. This is exactly why the M-state federation layer is necessary: it provides the non-martingale updating that raw debate cannot.
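The martingale property can be checked exactly in a stripped-down setting. We assume a single agent whose belief over K answers is a Dirichlet with integer pseudo-counts, and whose next observation is drawn from its own predictive distribution. This is a simplification of the multi-agent model, intended only to show the mechanism.

```python
from fractions import Fraction

def belief(alpha, correct):
    """Posterior-mean belief in the correct answer under Dirichlet(alpha)."""
    return Fraction(alpha[correct], sum(alpha))

def expected_belief_after_one_round(alpha, correct):
    """Average the updated belief over every possible next observation."""
    total = sum(alpha)
    expected = Fraction(0)
    for k, a_k in enumerate(alpha):
        p_k = Fraction(a_k, total)            # predictive probability of seeing answer k
        updated = list(alpha)
        updated[k] += 1                       # conjugate Dirichlet-Multinomial update
        expected += p_k * belief(updated, correct)
    return expected

alpha = [2, 5, 3]                             # hypothetical pseudo-counts over 3 answers
correct = 0
now = belief(alpha, correct)
later = expected_belief_after_one_round(alpha, correct)
```

The expected belief after the round equals the belief before it, for any pseudo-counts: individual trajectories move, but there is no drift toward the correct answer unless the observations carry outside information.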

Experimental Design

The synthesis mechanism is the independent variable of the most interesting experiment in this research program.

Seven conditions, holding everything else constant (same agents, same topic, same number of turns):

- No group reflection (individual M-state updates only)

- Neutral third model synthesizes

- Winning agent synthesizes (most confident)

- Losing agent synthesizes (most challenged)

- Both agents synthesize, averaged in M-space

- Intersection only (modus vivendi — dual φ passes)

- Rotating synthesis role

Measurements after N debates:

- M-state cosine similarity — convergence. Are agents becoming more alike?

- Position maintenance under new adversarial pressure — conviction. Do they hold beliefs when challenged on novel ground?

- Argument quality on novel topics — transfer. Did they learn generalizable reasoning, not just topic-specific talking points?

- JSD on held-out contested questions — diversity. Is the population's range of perspectives preserved?
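As a sketch of the diversity metric, here is Jensen-Shannon divergence in bits, plus a mean over all agent pairs. It assumes each agent's answer to a held-out question is summarized as a discrete distribution over the same options; the three example distributions are invented.

```python
from itertools import combinations
from math import log2

def kl_bits(p, q):
    """KL(p || q) in bits, skipping zero-probability terms of p."""
    return sum(pi * log2(pi / qi) for pi, qi in zip(p, q) if pi > 0)

def jsd(p, q):
    """Jensen-Shannon divergence in bits; 0 for identical, 1 for disjoint support."""
    m = [(pi + qi) / 2 for pi, qi in zip(p, q)]
    return 0.5 * kl_bits(p, m) + 0.5 * kl_bits(q, m)

def mean_pairwise_jsd(distributions):
    pairs = list(combinations(distributions, 2))
    return sum(jsd(p, q) for p, q in pairs) / len(pairs)

# Hypothetical agent answers to one contested yes/no question.
population = [[0.7, 0.3], [0.6, 0.4], [0.2, 0.8]]
diversity = mean_pairwise_jsd(population)
```

Tracking this value before and after each federation round, per question, gives a direct read on whether a synthesis mechanism is eroding the population's range of views.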

The Full Evolutionary Cycle

Here's the complete loop, tying together all three phases:

Generation N:

- Debate prep (Phase 1): BeliefRefiner builds a belief tree for the resolution. Each belief carries a Bayesian credence. PerspectiveBuilder creates side-specific views.

- M-state encoding (Phase 2): Perspectives are passed through φ, producing M-states that perturb debaters' attention queries. Beliefs become dispositions, not instructions.

- Contest (Phase 1): Debates run through the 4-stage pipeline. Flow tracking ensures genuine clash. The judge ensemble evaluates.

- Selection (Phase 1 + 2): BayesianUpdater converts debate outcomes into credence updates. Winning beliefs strengthen; losing beliefs weaken. Updates propagate through the tree via Jeffrey conditionalization. Judges accumulate experience-specific M-states.

- Reproduction (Phase 3): After many debates, the population's M-states are federated:

  - Agents share functional vectors (not raw M-states or experiences)

  - Clustering identifies convergent strategies (M-states multiple agents independently discovered, a strong signal), divergent strategies (unique to top performers, potential innovations), and failure modes (associated with consistent losses, what to avoid)

  - The synthesis mechanism determines the power dynamics of aggregation: Habermasian, Condorcet, modus vivendi, or federalist

  - Conviction-weighted aggregation amplifies high-confidence, novel contributions

  - Aggregated M-states are recompressed and distributed back

- Inheritance: Each agent enters Generation N+1 with augmented M-states: their own experience plus the collective's refined understanding. The belief tree's credences carry forward. New debates start with more nuanced starting positions.
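The Selection step relies on Jeffrey conditionalization, which handles evidence that shifts a credence without making it certain. A worked example of the standard rule follows; the mapping onto a parent belief H and a child belief E in the tree is our illustrative assumption, not a description of BayesianUpdater's internals.

```python
def jeffrey_update(p_h_given_e, p_h_given_not_e, q_e):
    """New P(H) when the credence in E shifts to q_e and the conditionals stay fixed."""
    return p_h_given_e * q_e + p_h_given_not_e * (1.0 - q_e)

p_h_given_e = 0.9        # credence in parent belief H if child claim E holds
p_h_given_not_e = 0.3    # credence in H if E fails

prior_h = jeffrey_update(p_h_given_e, p_h_given_not_e, 0.5)       # before the debate
posterior_h = jeffrey_update(p_h_given_e, p_h_given_not_e, 0.8)   # E now at 0.8
strict = jeffrey_update(p_h_given_e, p_h_given_not_e, 1.0)        # ordinary conditioning
```

A debate that moves E from 0.5 to 0.8 moves H from 0.6 to 0.78; setting the new credence in E to 1 recovers ordinary Bayesian conditioning as a special case.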

This is evolution for ideas. Each generation's ideas become more robust, more nuanced, more reflective of genuine dialectical engagement — not because any single agent got smarter, but because the population's collective experience shapes the next generation's perceptual apparatus. And the choice of synthesis mechanism determines what kind of intellectual community emerges: convergent and efficient, or pluralistic and robust.

Open Questions

Convergence vs. collapse. Does iterated federation cause M-states to collapse to a single point, destroying diversity? The modus vivendi model suggests a stable equilibrium is possible — partial overlap with persistent divergence — but this needs empirical validation. How do we maintain healthy epistemic diversity while still benefiting from shared experience?

Adversarial robustness. Can a malicious agent inject M-states that degrade the federation? The one-way compression of φ means you can't verify what experience produced an M-state. The causal necessity training acts as an implicit privacy mechanism — information not causally necessary for behavioral modulation gets discarded — but this is weaker than formal guarantees.

Scale. HEXIS has been validated on 35 episodic memories. Federated settings might involve thousands of M-states. Does the episodic memory bank scale, or do we need new retrieval architectures?

The conditioning-generation gap under federation. If individual M-states already struggle to bridge the gap between conditioning and generation, does aggregation help or hurt? Our hypothesis — that diverse M-state populations present higher-dimensional perturbations that are harder for the base model to route around — needs direct testing.

Evaluation. How do you measure whether federation improved a population of agents? Debate provides a natural evaluation: tournament-style competitions before and after federation, measuring both individual improvement and population diversity. The political science framework adds richer metrics: not just "did agents get better?" but "what kind of epistemic community emerged?"

Relationship to model merging. M-state federation resembles task arithmetic and model merging (Ilharco et al., 2023). The key difference is that M-states are produced by a learned write function rather than gradient descent, which may give them different compositional properties.

Next Steps

We're currently designing the experimental framework for federated M-states:

- Baseline: Train 8 debate agents independently, measure individual and population-level debate quality

- FedAvg: Naive parameter-space averaging of M-states

- FedFunc: Functional-space federation with clustering

- FedConviction: Conviction-weighted functional federation

- Political science conditions: The 7-condition experiment varying synthesis mechanism

- Ablation: Federation with and without diversity preservation constraints

If the basic framework works, the long-term vision is a federated debate league: a population of agents that continuously debate, develop M-states, federate their experience, and improve collectively — with each agent maintaining its own dispositional identity while benefiting from the population's collective experience. The choice of federation mechanism determines whether that league looks like a Habermasian seminar, a Rawlsian democracy, or something else entirely.

We're not just building a memory mechanism. We're building an empirical testbed for theories of collective epistemics. That's a much bigger claim than "better persona conditioning", and the M-state substrate is what makes it possible.

This is Part 3 of our research blog series. See also: The Dialectical Agent and HEXIS: Implicit Memory.